Fitting a line with multiple variables

We often fit a line (linear regression) to two variables x and y to see how they correlate. This is so common that we can even do it in Excel.

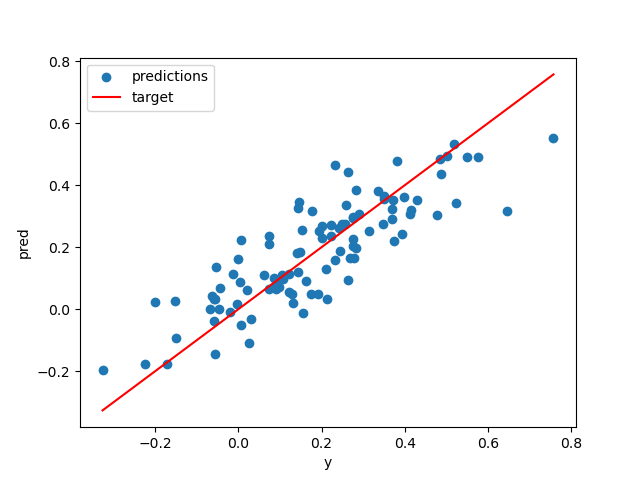

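In practice, however, there are often multiple variables that we need to fit. The natural extension is to solve Ax + b = y, where the matrix A (one row per sample, one column per variable) and the target vector y are given, and we want to find the vector x and the scalar b that best fit the equation. Note that x here is the vector of unknown coefficients, not the input variable from the two-variable case.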

If you search the web, you will find explanations of how to solve Ax = y, but usually without the bias term b. In numpy, the function numpy.linalg.lstsq likewise solves Ax = y without the bias term b. How, then, do we solve it with the bias b?

The trick is to rewrite Ax + b as A'x', where A' = [A 1] and x' = [x; b]. That is, we append a column of ones to the right of A, and append the bias b as an extra entry at the end of x. Each row of A'x' then equals the corresponding row of Ax plus b, so the two forms are equivalent. We can then solve A'x' = y with, say, the numpy.linalg.lstsq function and extract x and b from x'.
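Here is a minimal sketch of the trick, assuming synthetic, noise-free data so that the fit should recover the known coefficients. The names `A_aug`, `true_x`, and `true_b`, and the data itself, are made up for illustration and are not taken from the example repo.

```python
import numpy as np

# Synthetic data (hypothetical values chosen for illustration):
# 50 samples, 3 variables, with known coefficients and bias.
rng = np.random.default_rng(0)
A = rng.normal(size=(50, 3))
true_x = np.array([2.0, -1.0, 0.5])
true_b = 3.0
y = A @ true_x + true_b  # noise-free targets

# Append a column of ones to A so that the last coefficient acts as the bias.
A_aug = np.hstack([A, np.ones((A.shape[0], 1))])

# Solve A_aug @ x_aug ~= y in the least-squares sense.
x_aug, residuals, rank, singular_values = np.linalg.lstsq(A_aug, y, rcond=None)

# Split the solution back into the coefficients x and the bias b.
x, b = x_aug[:-1], x_aug[-1]
print("x =", x)
print("b =", b)
```

With real, noisy data the recovered `x` and `b` will only approximate the underlying values, and the `residuals` returned by `numpy.linalg.lstsq` give the sum of squared errors of the fit.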

Check out this repo for a simple example script.