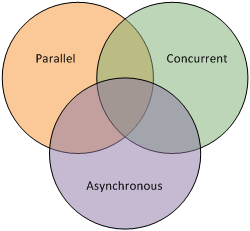

Concurrent, Parallel, Async

These three concepts are related but not the same. Let’s see how they are related but different.

Concurrent programming means that multiple tasks are executed in overlapping time window. Concurrency does not imply parallel programming. Consider a single-core computer. How does it do multi-tasking (i.e., concurrency)? By performing one task for a small amount of time, pause and move to a different task, run for some time and pause, and so on. It doesn’t run multiple tasks simultaneously per se, but this task switching gives the impression of running multiple tasks at the same time to the user.

Parallel programming means utilizing multiple computation units simultaneously. This obviously requires multiple cores. It may sound strange, but parallel programming does not imply concurrency either, because one can chop-off a single task into multiple sub-tasks and run them in parallel. This still runs only a single-task at a time, so this is not a concurrent programming. For example, a matrix multiplication can be parallelized by computing inner product of every <row, column> pairs independently.

Finally, asynchronous programming is a related but different concept to concurrency. It means that an operation is non-blocking, i.e., the computational resource is handed back to the caller, until the operation is complete. Asynchronous paradigm is typically used as a technique for achieving efficient concurrency.

Concrete C++ Example

Let’s take a look at a concrete C++ example to understand the concepts better. Say your job is to add two values:

- the first value needs to be downloaded, which takes about 100ms

- second value needs to be computed, which takes around 50ms

For simplicity, let’s assume the addition at the end is instantaneous. How long would it take for your computer to finish?

Let’s program this in ordinary synchronous manner.

#include <iostream>

#include <chrono>

#include <thread>

int io_operation() {

std::cout << "downloading data...\n";

std::this_thread::sleep_for(std::chrono::milliseconds(100));

return 1;

}

int intensive_task() {

std::cout << "cpu at full blast...\n";

auto sum = 0;

for (std::size_t idx = 0; idx < 100000000; ++idx) {

++sum;

}

return 0;

}

int main() {

auto x = io_operation();

auto y = intensive_task();

std::cout << x + y << "\n";

return 0;

}

Let’s measure the time it takes to run.

$ time ./a.out

downloading data...

cpu at full blast...

1

real 0m0.156s

user 0m0.055s

sys 0m0.000s

So, it takes about 150ms, which is simply the amount of time from each operation combined. No surprise here.

Can you do it more efficiently? We can employ parallel paradigm —run both tasks simultaneously using two separate threads.

#include <iostream>

#include <chrono>

#include <thread>

#include <future>

int io_operation() {

std::cout << "downloading data...\n";

std::this_thread::sleep_for(std::chrono::milliseconds(100));

return 1;

}

int intensive_task() {

std::cout << "cpu at full blast...\n";

auto sum = 0;

for (std::size_t idx = 0; idx < 100000000; ++idx) {

++sum;

}

return 0;

}

int main() {

auto x = std::async(std::launch::async, io_operation);

auto y = intensive_task();

std::cout << x.get() + y << "\n";

return 0;

}

Here, std::async(...) creates a new thread that executes io_operation(). Later when we call x.get(), we wait for the thread to complete. Wit this, let’s see how long it takes on a multi-core processor

$ time ./a.out

cpu at full blast...

downloading data...

1

real 0m0.104s

user 0m0.059s

sys 0m0.000s

Great, we can run it in 100ms, saving 50ms in runtime. Here is a question.

What if we only have a single core?

Computer resources are not free; it would be great if we have another core to throw at, but what if we don’t? Well, here comes asynchronous paradigm to rescue! Notice the function we are using: std::async, which looks a lot like asynchronous. The same code above shall work just fine even if we only have a single core. Don’t believe me?

$ time taskset -c 0 ./a.out

cpu at full blast...

downloading data...

1

real 0m0.116s

user 0m0.057s

sys 0m0.000s

Here, taskset -c 0 forces our program to use only a single-core. And yet, we still save about 40ms of runtime compared to the synchronous single-threaded code. This is possible because the io_operation() barely uses the CPU, so the operating system assigns most of its CPU resource into the second task. Though a bit slower (~10ms) than the parallel version, it runs quite efficient considering that we are employing only a single core here.

This demonstrates both asynchronous and concurrent programming. It is asynchronous because io_operation() does not block intensive_task(). At the same time, it is concurrent programming because the two tasks’ execution windows overlap. Finally, the code runs either parallel or non-parallel, depending on whether we have multiple cores at disposal.