Speed up your server — 2

Today, let’s continue our exploration of how to speed up our server. In the previous article, we used coroutines to speed up a server that mainly performs non-CPU-bound jobs, such as input/output tasks. In this article, we will discuss how to speed up the server when it performs CPU-intensive jobs.

Baseline

To simulate CPU-intensive tasks, let’s implement a server that calculates a Fibonacci number with a deliberately naive recursive function. Again, we will use the FastAPI framework with the Uvicorn server for simplicity, but feel free to implement it in your favorite programming language and server framework.

# baseline.py
from fastapi import FastAPI
import time

app = FastAPI()

def fibo(n: int) -> int:
    if n <= 1:
        return n
    return fibo(n - 2) + fibo(n - 1)

@app.get("/baseline/fibo/{n}")
def baseline_fibo(n: int):
    x = fibo(n)
    return f"{x}"
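To get a feel for why this endpoint is CPU-bound, here is a small sketch that counts how many times the naive fibo() gets invoked for a given n. The helper name fibo_calls is made up for this illustration and is not part of the server; it relies only on the fact that each call with n > 1 triggers two further calls.

```python
def fibo_calls(n: int) -> int:
    """Count the invocations the naive recursive fibo(n) performs.

    Hypothetical helper for illustration only. It uses the recurrence
    calls(n) = 1 + calls(n - 2) + calls(n - 1), with calls(0) = calls(1) = 1,
    and evaluates it iteratively so the count itself stays cheap.
    """
    prev, cur = 1, 1  # calls(0), calls(1)
    for _ in range(n - 1):
        prev, cur = cur, 1 + prev + cur
    return cur


if __name__ == "__main__":
    for n in (10, 20, 30):
        print(n, fibo_calls(n))
```

For n of 30 this comes to roughly 2.7 million function calls per request, all pure Python with no I/O to wait on.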

Let’s fire up the server and measure the latency by sending a total of 100 requests, 10 at a time, each with n of 30.

# deploy the server
fastapi run baseline.py

# benchmark from a client system
ab -n100 -c10 -e baseline.csv SERVER_IP:8000/baseline/fibo/30

Parallelization

In today’s world, practically all systems are equipped with a plethora of cores that can perform tasks in parallel. Naturally, we want to utilize all available cores to run the server.

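In Python, this is easy with the concurrent.futures library. In particular, we will instantiate a ProcessPoolExecutor and use it to run the CPU-intensive task, i.e., the fibo() function. We use processes rather than threads because, in standard CPython builds, the global interpreter lock keeps threads from executing Python code on several cores at once. Specifically, we use the asyncio library’s get_event_loop() function to obtain the event loop and submit the task using its run_in_executor() method. Note that this method returns an awaitable future rather than the result itself, hence the await keyword.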

The diff below shows the parallel implementation just described.

--- before
+++ after
@@ -1,7 +1,11 @@
 from fastapi import FastAPI
 import time
+from concurrent.futures import ProcessPoolExecutor
+import asyncio
+

 app = FastAPI()
+executor = ProcessPoolExecutor()

 def fibo(n: int) -> int:
@@ -14,3 +18,10 @@
 def baseline_fibo(n: int):
     x = fibo(n)
     return f"{x}"
+
+
+@app.get("/par/fibo/{n}")
+async def par_fibo(n: int):
+    loop = asyncio.get_event_loop()
+    x = await loop.run_in_executor(executor, fibo, n)
+    return f"{x}"
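If you want to try the same pattern without a web server, here is a minimal standalone sketch. It is not the article's server code: the helper fibo_many is a made-up name, and it uses asyncio.get_running_loop(), which inside a coroutine gives the same loop as get_event_loop(). The executor is passed in as a parameter so that any concurrent.futures executor can be swapped in.

```python
import asyncio
import concurrent.futures


def fibo(n: int) -> int:
    if n <= 1:
        return n
    return fibo(n - 2) + fibo(n - 1)


async def fibo_many(ns, executor):
    """Run fibo() for every n in ns on the given executor.

    Hypothetical helper for illustration. Passing None falls back to
    asyncio's default thread pool.
    """
    loop = asyncio.get_running_loop()
    # Each call returns a future right away; the work runs in the pool.
    futures = [loop.run_in_executor(executor, fibo, n) for n in ns]
    # gather() awaits all futures and keeps the results in input order.
    return await asyncio.gather(*futures)


if __name__ == "__main__":
    # The guard matters on platforms that start workers by re-importing
    # this module (e.g. the "spawn" start method).
    with concurrent.futures.ProcessPoolExecutor() as pool:
        print(asyncio.run(fibo_many([20, 21, 22], pool)))
```

Since fibo is a plain top-level function with an int argument, it can be pickled and sent to a worker process, which is what a process pool needs.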

Alright, let’s fire up the server and run the same benchmark as before.

ab -n100 -c10 -e par.csv SERVER_IP:8000/par/fibo/30
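To compare the two runs at a given percentile, the CSV files written by ab's -e option can be read with a few lines of Python. This is only a sketch: it assumes each file has one header row followed by rows of "percentage,time in ms", and the function names read_percentiles and speedup_at are made up for this example.

```python
import csv
import sys


def read_percentiles(lines):
    """Map percentile -> latency in ms from an `ab -e` style CSV.

    `lines` is any iterable of text lines (an open file works).
    Assumption: the first row is a header and every other row is
    "percentage,time in ms".
    """
    reader = csv.reader(lines)
    next(reader)  # skip the header row
    return {int(row[0]): float(row[1]) for row in reader if len(row) == 2}


def speedup_at(baseline, parallel, pct=95):
    """Ratio of baseline latency to parallel latency at percentile pct."""
    return baseline[pct] / parallel[pct]


if __name__ == "__main__" and len(sys.argv) == 3:
    # Usage: python compare.py baseline.csv par.csv
    with open(sys.argv[1], newline="") as b, open(sys.argv[2], newline="") as p:
        ratio = speedup_at(read_percentiles(b), read_percentiles(p))
    print(f"p95 speedup: {ratio:.1f}x")
```

The script name compare.py in the usage comment is likewise just a placeholder.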

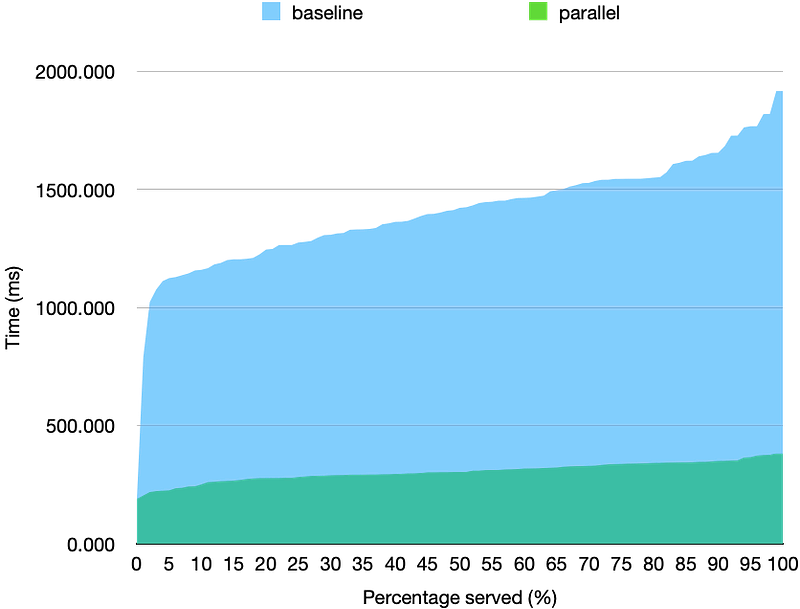

We observe about a 5x difference between the baseline and the parallel implementation at p95. Given that the server was running on a 6-core system, that is roughly 83% of the ideal 6x speedup: not perfect, but acceptable.

Today, we explored how to distribute the server’s CPU-intensive workload across a process pool to achieve scalability. In the next article, we will look into how to speed up the server by employing coroutines in parallel, so stay tuned!