Llama.cpp Benchmark: CPU vs iGPU

I tested the inference speed of Llama.cpp on my mini desktop computer equipped with an AMD Ryzen 5 5600H APU. This processor features 6 cores (12 threads) and a Radeon RX Vega 7 integrated GPU. While neither the CPU nor GPU is particularly high-performance, I wanted to compare their capabilities in running Llama.cpp.

CPU Benchmark: Ryzen 5 5600H (6 Cores)

To begin, I tested Llama.cpp using the CPU only. Below are the steps I followed to build and run the benchmark:

# Download the model

wget https://huggingface.co/TheBloke/Llama-2-7B-GGUF/resolve/main/llama-2-7b.Q4_0.gguf

# Clone the repository

git clone https://github.com/ggerganov/llama.cpp

cd llama.cpp

mkdir build

cd build

# Build with Release configuration

cmake .. -DCMAKE_BUILD_TYPE=Release

make

# Run benchmark

./bin/llama-bench -m ../../llama-2-7b.Q4_0.gguf

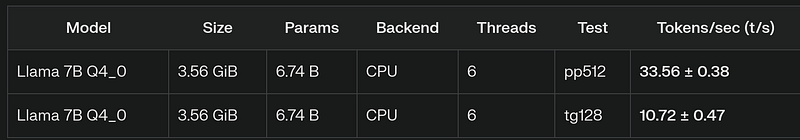
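The CPU run relies on llama-bench's defaults. As a minimal sketch, the same test can be spelled out with an explicit thread count and test sizes. The `./bin/llama-bench` location assumes a default CMake build, and `THREADS=6` is an assumption based on the six physical cores of the 5600H; adjust both for your setup.

```shell
#!/bin/sh
# Assumed paths: the model sits two levels above the build directory,
# and CMake placed the binary under ./bin (adjust if yours differs).
MODEL=../../llama-2-7b.Q4_0.gguf
BENCH=./bin/llama-bench
THREADS=6   # assumption: one thread per physical core

# -t: thread count, -p: prompt tokens (pp512), -n: generated tokens (tg128)
CPU_ARGS="-m $MODEL -t $THREADS -p 512 -n 128"

if [ -x "$BENCH" ]; then
    # Word splitting of $CPU_ARGS is intentional here
    "$BENCH" $CPU_ARGS
else
    echo "llama-bench not found at $BENCH; would run: $BENCH $CPU_ARGS"
fi
```

Pinning these values makes the run easier to reproduce and compare across builds.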

The benchmark utilized all six CPU cores (without hyperthreading). It reached about 34 t/s in prompt processing (pp512) and about 10 t/s in token generation (tg128).

iGPU Benchmark: Radeon RX Vega 7

Next, I tested Llama.cpp with GPU support enabled using Vulkan. The build process included the following modifications:

# Build with Vulkan support

cmake .. -DGGML_VULKAN=on -DCMAKE_BUILD_TYPE=Release

make

# Run benchmark with layers loaded into GPU

./bin/llama-bench -m ../../llama-2-7b.Q4_0.gguf -ngl 100

Key differences from the CPU test:

- Vulkan support was enabled using the -DGGML_VULKAN=on flag.

- All model layers were loaded into the GPU using -ngl 100.
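With a Vulkan-enabled build, both offload settings can also be compared in a single invocation, since llama-bench accepts comma-separated values for its parameters. The following is a sketch under the same path assumptions as the commands in this post (binary under `./bin`, model two directories up). Note that `-ngl 0` in a Vulkan build may not match a CPU-only build exactly, so treat it as a rough comparison only.

```shell
#!/bin/sh
# Assumed paths, matching the layout used elsewhere in this post
MODEL=../../llama-2-7b.Q4_0.gguf
BENCH=./bin/llama-bench

# 0 = keep all layers on the CPU, 100 = offload every layer to the iGPU
NGL_VALUES="0,100"
GPU_ARGS="-m $MODEL -ngl $NGL_VALUES"

if [ -x "$BENCH" ]; then
    # Runs pp512 and tg128 once per -ngl value and prints one table
    "$BENCH" $GPU_ARGS
else
    echo "llama-bench not found at $BENCH; would run: $BENCH $GPU_ARGS"
fi
```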

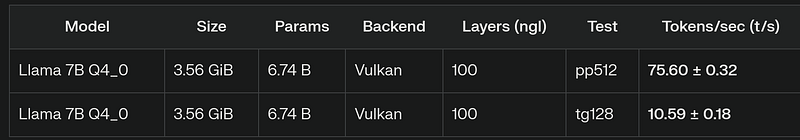

Prompt processing (pp512) reached about 76 t/s, while token generation (tg128) stayed at about 10 t/s.

Observations and Analysis

Performance Gains:

- The prompt processing test (pp512) showed a roughly 2.2x speedup on the iGPU compared to the CPU (from ~34 t/s to ~76 t/s).

- However, token generation (tg128) performance remained nearly identical (~10 t/s).
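The speedup figures can be checked with a few lines of shell. This sketch uses the approximate throughputs quoted in this post; the variable and function names are only illustrative.

```shell
#!/bin/sh
# Approximate throughputs quoted in this post (tokens per second)
CPU_PP=34
GPU_PP=76
CPU_TG=10
GPU_TG=10

# Speedup = iGPU throughput / CPU throughput, rounded to two decimals
speedup() {
    awk -v gpu="$1" -v cpu="$2" 'BEGIN { printf "%.2f", gpu / cpu }'
}

PP_SPEEDUP=$(speedup "$GPU_PP" "$CPU_PP")
TG_SPEEDUP=$(speedup "$GPU_TG" "$CPU_TG")

echo "pp512 speedup: ${PP_SPEEDUP}x"
echo "tg128 speedup: ${TG_SPEEDUP}x"
```

Because the inputs are rounded, the ratios are only indicative.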

Power Efficiency:

- The iGPU appeared to consume noticeably less power, judging by the reduced fan noise during operation.

- This suggests, but does not confirm, that the iGPU build is more energy-efficient than the CPU-only build; fan noise is only an indirect indicator of power draw.

System Utilization:

- With the iGPU handling inference tasks, the CPU remained largely idle, allowing other CPU-intensive processes to run concurrently.

Conclusion

While the iGPU provided a significant improvement in prompt processing speed (roughly 2x), its impact on token generation was minimal, contrary to expectations of a proportional gain across both metrics.
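One plausible explanation for the flat token generation speed is memory bandwidth: generating each token requires reading essentially all of the model weights, and the iGPU shares the same system RAM as the CPU. A rough ceiling is therefore bandwidth divided by model size. The sketch below is a back-of-the-envelope estimate, not a measurement. The 51.2 GB/s figure is an assumption (theoretical peak of dual-channel DDR4-3200, which this machine may or may not have), and 3.83 GB is the approximate size of the Q4_0 model file.

```shell
#!/bin/sh
# ASSUMPTION: theoretical peak bandwidth of dual-channel DDR4-3200 (GB/s).
# The actual memory configuration of the test machine is not stated.
MEM_BW_GBS=51.2

# Approximate size of llama-2-7b.Q4_0.gguf in GB
MODEL_GB=3.83

# Upper bound on tokens/s if every token reads all weights once
TG_CEILING=$(awk -v bw="$MEM_BW_GBS" -v size="$MODEL_GB" \
    'BEGIN { printf "%.1f", bw / size }')

echo "Estimated tg ceiling: ${TG_CEILING} t/s"
```

Under these assumptions the ceiling is in the same range as the ~10 t/s observed on both the CPU and the iGPU, which would be consistent with token generation being limited by memory rather than compute.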